INTRODUCTION

by

Adil KABBAJ

INSEA, Rabat, Morocco

From Intelligent Systems to Intelligent Agents

Intelligent Systems using Amine Platform

A (sequential) map for a visit to Amine Platform

Intelligent Systems: A brief historical overview

The term Intelligent System is polysemous; it is used in different contexts to refer to various types of information systems. To give an idea of what we mean by Intelligent System, we consider the development of its meaning in Artificial Intelligence (AI), the science of Intelligent Systems, and in Cognitive Science, the science of Cognitive Systems. Broadly, we can identify four periods in the development of Intelligent Systems, each of which introduced and explored different types of intelligent systems.

Note: the

following presentation is very informal and without references. Also, the

number and the timing of periods are suggestive only. The aim is to provide a

historical canvas for presenting briefly the rapid development of Intelligent

Systems in AI and Cognitive Science.

1. Period 1 (1956 to 1970), Exploration of intelligent processes and development of the first intelligent systems: This period saw the first exploration of various intelligent (mental) processes: problem solving, games, theorem proving, reasoning, natural language processing and learning. Many programs were developed in this period. The main conclusions were: a) intelligent processes are very complex, b) knowledge is needed and important, c) meta-knowledge (knowledge about knowledge) is needed and important, d) the "search space" paradigm is important.

2. Period 2 (1970 to 1986), Intelligent Systems are Knowledge, Meta-Knowledge and Intentional-Based Systems:

It became evident that knowledge should

constitute the kernel of any intelligent system; intelligent

systems are Knowledge-Based

Systems. The dominant definition of AI at that time was that

"AI is the science of Knowledge-Based Systems".

Several main topics emerged: knowledge structures, the "meaning" of knowledge (what we now call ontology), knowledge representations, operations on knowledge structures, meta-knowledge, context for knowledge, and related issues such as background knowledge and common sense. It also became necessary to consider various intelligent processes as knowledge-based processes. Semantic

Networks and Conceptual Structures, such as Script, Schema, Frame,

Prototype and Scenario were proposed and used to deal with the fuzzy and

complex notion of context. Also, the complexity of background knowledge and common sense, and the complexity of conceptual structures and intelligent processes, became more and more apparent. To accommodate this complexity, researchers subdivided intelligent processes into many subprocesses:

for instance natural language processing was subdivided into morphology,

lexicology, parsing (syntax), understanding (semantics and pragmatics),

generation, interaction (dialog), etc. However, researchers became increasingly aware that not only subprocesses but also processes are highly interrelated and interdependent. For instance, to develop a "real" natural language processing system, we need to consider all language levels (morphology, lexicology, syntax, semantics, pragmatics). Moreover, natural language processing is based on inference, problem solving and planning. Behavior or planning is in turn based on inference/reasoning, on knowledge and on natural language processing (if the behavior involves interaction and communication in natural languages). Thus, the need for a systemic and integrated approach became pressing. Other knowledge-related issues were also considered:

truthfulness, certainty and modality of knowledge, relation of knowledge to

belief, to time, to intention, to knowledge inference strategies, to knowledge

acquisition, etc. Another important topic at this time was the view that intelligent systems are Intentional Systems, i.e. knowledge of the world and knowledge about actions, plans and goals are used by an intelligent system to achieve its intentions or goals (and also to react to the environment, which can itself be viewed as a goal). Another aspect that gained importance is the modularity of knowledge: even if knowledge is

highly connected, there should be some mechanisms to form chunks of knowledge that are used in specific

contexts. This aspect was captured by the distinction made between the whole

context (ultimately the memory) which is considered as a huge semantic network,

and conceptual structures which are conceptual units or chunks

of knowledge. Another important discovery of this period concerns the relation between structures and processes: in computer science (from its beginning to the eighties), there was a clear distinction between data (structures) and procedures (actions, functions, processes or treatment/behavior in general). This distinction was "inherited" by AI, but in computer science as well as in AI, researchers became increasingly aware that this sharp distinction between the two aspects of information (descriptive vs. prescriptive information) is not mandatory. Researchers started to model dynamic objects, i.e. objects with both descriptive and prescriptive information. In AI, Frame (proposed by Minsky in 1975) was the main representative of dynamic objects. But another important

formulation of dynamic object, proposed by Norman, Rumelhart

and the LNR group in their important book "Explorations in Cognition,

1975", was their active semantic networks.

These two formulations of dynamic objects followed two different paths. Frame was defined basically as a set of roles (slots), each role having a filler. In the first versions of Frame, a filler corresponded to a datum (simple or structured) with some attributes and procedures that can be activated in different ways. The structural part of such a Frame consisted of the slots and the attributes of the fillers. The behavioral part consisted of the procedures attached to the fillers.

Later (in the eighties), it became clear that a filler can be itself a Frame.

Hence, the simple structure of a Frame evolved to a network-like structure

where fillers correspond to nodes of a network, slots to links/relations

between fillers and the filler's attributes and procedures correspond to

properties attached to fillers/nodes. In this way, Frame became a kind of active semantic network. Let us now consider the development of the active semantic networks (ASN) of the LNR group. To understand this development, note that Norman (like most members of the LNR group) was a cognitive psychologist and a leader of the emerging cognitive science. He was interested in human cognition, and the LNR group developed intelligent systems to model, understand and analyze human cognition. One topic that became central in the emerging cognitive science in general, and for the LNR group in particular, revolves around the following question: if human cognition is based on knowledge representation in general and on ASN in particular, how are these networks "represented" in the brain, if they are represented at all? Gradually, the group shifted from active semantic networks to (active) neural networks (neural networks are active by definition). From the implementation

of ASN on computers, the group shifted to the (eventual) implementation on

neural networks. And the problem in fact was not how to implement ASN with

neural networks but how ASN could emerge

from neural networks (in the sense that at the cognitive level, neural network

activity can be "viewed as" an ASN). This shift of interest within the LNR group had its impact on AI: in the eighties, AI researchers paid great attention to neural networks and to "distributed knowledge and knowledge without representation". The LNR group was "replaced" by the PDP group (Rumelhart worked with McClelland), and the book "Parallel Distributed Processing" (1986) marks the official beginning of the "neural networks era". Rumelhart, with the PDP group, focused on "low cognition" while Norman continued his work on "high cognition". Active Semantic Networks, as proposed by the LNR group, were not developed further.

The period 1980-1985 was the foundation period of Cognitive

Science (or Science of Cognition), a multi-disciplinary new science

(cognitive psychology, AI, linguistics, philosophy) that focuses on cognition.

Several books, written by authors from the disciplines involved, were proposed as a foundation for this new science. One of them was John Sowa's book "Conceptual Structures: Information Processing in Mind and Machine" (1984). In this book, Sowa proposed Conceptual Graph (CG) Theory as a

foundation for "high cognition". CG theory is a synthesis from

several fields: semantic networks and related topics in AI, logic (Sowa showed

the equivalence of CG logic and predicate logic and he used the Existential Graphs of C. S. Peirce as a logical foundation for CG theory), linguistics (as a semantic network notation, CG can be used to represent meaning and background knowledge), relational database semantics, cognitive psychology and philosophy (Sowa showed how CG theory can represent various conceptual structures that

were proposed and developed in cognitive psychology and philosophy). What is of

interest here and in relation with Active Semantic Network (ASN), is that Sowa

showed how CG (which is a general form of static semantic network) can be "merged" with a dataflow graph (developed in the field of parallel computation, the dataflow graph is an important formulation and model of parallel computation). Starting from this proposal (the merging of CG and dataflow

graph), A. Kabbaj proposed Active

Conceptual Graph and developed the language SYNERGY which is based on

this extended view of CG. Active CG can be viewed also as a development and a

continuation of ASN. See SYNERGY for detail. At the philosophical and especially the epistemological level, the main questions during this period (1970-1986) were: what are knowledge, intelligence, meta-knowledge and intelligent systems? We can summarize the result of the debate around these questions as follows. What is knowledge? For humans, knowledge is the

result of complex processes based on perception, abstraction, categorization,

concept formation, experience, and learning. Guided by their knowledge and intentions, humans are able to analyze incoming information from their senses and construct knowledge ("conceptual representations"?) of the perceived world. In philosophy, a topic related to this conceptualization process is the problem of "reference" and "meaning", i.e. the adequacy of our mental representations (abstractions) to reality. Of course, the conceptualization process is a continuous process; it is related to other processes, and previous knowledge becomes context for the interpretation of new knowledge. Intelligent systems of this period did not have such a complex conceptualization process; knowledge was given to the system by the programmer (due to this

shortcut, many researchers claimed that those intelligent systems are not

really intelligent, i.e. they are not intelligent like humans). Let us consider

now the second question: what is intelligence?

Of course, this is a difficult and complex question but the kernel for an

answer may be expressed as follows: intelligence is

the capability of a system to know what it knows and to know how to use its knowledge

to achieve its goals. This "condensed formula" highlights the strong relation between intelligence and meta-knowledge (knowledge about knowledge). It also refers to the capability of an intelligent system to

use its knowledge in decision making and in achieving its goals. Heuristics,

strategies, meta-rules, meta-goals, meta-plans and context are forms of

meta-knowledge. Indeed, context (in terms of script, frame or any other complex

structures) is a kind of meta-knowledge since it provides the relevant

knowledge that enables the interpretation and the organization of the new

knowledge. In sum, intelligence is based on

knowledge, meta-knowledge and intention. Knowledge, Meta-Knowledge and

Intentional Knowledge (goals, plans, actions, motivation, belief, etc.) were

given to intelligent systems of this period by the programmer(s) as a set of

programs. Thus, intelligence was not an emergent property of the system; it was

an "external intelligence" (provided by the programmer as a set of

programs). The challenge for the next period(s) was to develop systems with

their own intelligence, i.e. systems that can develop their own knowledge from

the perceived world (or environment), that can develop their own meta-knowledge

and their own intentional knowledge.
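The Frame formulation described above (slots with fillers, procedures attached to fillers, and fillers that can themselves be Frames) can be illustrated with a minimal Java sketch. All names here (`Frame`, `fill`, `attach`, `FrameDemo`) are hypothetical and chosen for illustration only; they are not taken from Minsky's proposal or from any Amine API.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

// Hypothetical sketch of a Frame as a "dynamic object":
// slots and fillers (structural part) plus attached procedures (behavioral part).
class Frame {
    private final String name;
    private final Map<String, Object> fillers = new LinkedHashMap<>();
    private final Map<String, Function<Frame, Object>> procedures = new LinkedHashMap<>();

    Frame(String name) { this.name = name; }

    String name() { return name; }

    // Structural part: a slot filled with a datum or with another Frame.
    Frame fill(String slot, Object filler) {
        fillers.put(slot, filler);
        return this;
    }

    // Behavioral part: a procedure activated when the slot has no filler yet.
    Frame attach(String slot, Function<Frame, Object> procedure) {
        procedures.put(slot, procedure);
        return this;
    }

    Object get(String slot) {
        Object value = fillers.get(slot);
        if (value == null && procedures.containsKey(slot)) {
            value = procedures.get(slot).apply(this); // activate the attached procedure
            fillers.put(slot, value);                 // cache the computed filler
        }
        return value;
    }
}

class FrameDemo {
    static Frame room() {
        Frame table = new Frame("Table").fill("legs", 4);
        return new Frame("Room")
            .fill("width", 3)
            .fill("length", 5)
            .fill("furniture", table) // a filler that is itself a Frame
            .attach("area", f -> (Integer) f.get("width") * (Integer) f.get("length"));
    }

    public static void main(String[] args) {
        Frame room = room();
        System.out.println(room.get("area"));
        System.out.println(((Frame) room.get("furniture")).get("legs"));
    }
}
```

Because a filler may be another `Frame`, a set of frames built this way already forms a small network of nodes linked by slots, which is the evolution toward a network-like structure described above.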

3. Period 3 (1980 to 1996), Toward Autonomous Intelligent Systems:

Several threads of research were active in this period : a) one thread

continued to explore knowledge-based systems (to study knowledge structures,

knowledge representation, knowledge meaning or ontology, context,

knowledge-based processes, etc.); b) another thread started to explore the

integrated approach concerning knowledge and processes; c) another thread was

interested in the development of neural networks and connectionist models and machines to deal with the capability of the system to perceive its own environment and to acquire knowledge from its own perception. "Distributed knowledge and knowledge without representation" was an important feature of these models; d) another thread was concerned with the nature of meta-knowledge and how a system can develop its own meta-knowledge. Meta-knowledge can be developed from experience and learning: as the system attempts to use its knowledge to understand, explain, solve problems and satisfy its goals, it learns from these situations/experiences, which themselves become knowledge. Of

course, memory and learning

are the key components in this process: learning is

a multi-strategy process (various learning strategies: generalization, analogy,

abduction, explanation-based learning, etc.) which operates on the new experiences and integrates them in memory,

involving comparison, modification and connection of the new experiences with

the old ones as well as reorganization and update of the memory. Learned

experiences give the system the context and the meta-knowledge required for intelligent behavior; e) another thread of research in this period was concerned with the source and development of

intentional knowledge: not only goals and plans, but motivation, belief,

emotion and other cognitive categories are required to provide a precise

account of autonomous intentional systems. It became clear that autonomous intentional systems are "situated systems": the "body" and the various dimensions of the environment (society)

have a strong impact on the intentional knowledge and capabilities of the system; f) another thread focused on the interaction and communication of multiple autonomous systems; g) another thread of research stressed the "evidence" that an autonomous system (like a human) is first a reactive system, in addition to being

intelligent, intentional, and interactive. A "really" autonomous

intelligent system should be able to react to any "perturbation" from

the environment. Also, h) another thread of research focused on "collective or emerging intelligence": a

reactive system can not be intelligent by itself but

a community of such reactive systems can exhibit intelligent behavior.

4. Period 4 (1990 to the present), Integrated Autonomous Intelligent Agents:

This period is characterized, among other things, by the development of Case-Based Reasoning, Multi-Strategy Learning, Ontology, Agents and Multi-Agent Systems, Hybrid Systems (Symbolic and Connectionist), etc. In this period, all the previous threads have continued to progress. Also, great efforts have been devoted to integrating different combinations of these threads. Ontology, Agents and Multi-Agent Systems constitute the appropriate context to explore these integrations. It has become clear that AI

tools and platforms should provide an integrated architecture to build

Intelligent Systems and Intelligent Agents.

From Intelligent Systems to Intelligent Agents

Above, we noted that Artificial Intelligence

(AI) is a Science of Intelligent Systems. In summary, we can say that

the main goal of AI is the use of computer science and information processing

as a paradigm to model Intelligence in order to develop Intelligent Systems and

Intelligent Agents.

Without going into a deep discussion of what Intelligent Systems and Intelligent Agents are, and what the difference between the two is, let us say that an Intelligent System is any system that incorporates and performs an intelligent process/task (like problem solving, reasoning, planning, learning, natural language processing, etc.).

Like the notion of Intelligent System, the notion of "Intelligent Agent" has received several definitions in the literature, starting with the specification of a typology of agents that yields different types/categories of agents. The most important distinction is

between "Cognitive Agent" and "Reactive Agent". A cognitive agent is an agent with cognitive

capabilities (mental states, knowledge, belief, ...

and cognitive processes like reasoning, learning, problem solving, planning,

natural language processing, etc.). Since cognitive agents are considered in

general in the context of Multi-Agents Systems, communication becomes another

important cognitive process. A reactive agent

is an agent with perceptual and acting capabilities with a kind of

Stimulus-Response behavior.

An integrated agent is an agent that integrates both cognitive and reactive capabilities. Unlike an intelligent system, an "ideal" integrated agent integrates most of the cognitive and reactive capabilities and processes, becoming closer to a human (considered as an "ideal integrated agent") than to "a simple intelligent system". In this context, it becomes mandatory to speak of the architecture

of "intelligent agent". Figure 1 provides an example of such an

architecture. In our proposed architecture, an agent has basically three

components:

· The kernel of an intelligent agent, composed of a Dynamic Memory and its related processes (dynamic knowledge integration process, information retrieval, etc.), Inference & Reasoning strategies, and Learning strategies. The content of Dynamic Memory is very rich, complex and diverse. It includes the agent's knowledge of the world (i.e. the agent's ontology), including knowledge of itself and of other agents, diverse knowledge bases about various topics and areas of expertise, and its various experiences.

· Cognitive Processes that are based on the kernel; they include Behavior (Problem Solving, Natural Language Processing, Intentional Planning, and Reactive behavior) and Understanding and Explaining.

· Perceptual processes, Communication and Physical Action. An agent communicates with and acts upon the world/environment (and the other agents) through perceptual processes, communication and physical action.

Figure 1: Architecture of an Intelligent Agent
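The distinction between reactive, cognitive and integrated agents can be made concrete with a small Java sketch. It is only an illustration under simplifying assumptions: the types `Agent`, `ReactiveAgent`, `CognitiveAgent` and `IntegratedAgent` are hypothetical and do not belong to Amine's APIs, and the "cognitive" part is reduced to a memory lookup.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical types for illustration; none of them is part of Amine.
interface Agent {
    String act(String percept);
}

// Reactive agent: a fixed Stimulus-Response table and no memory.
class ReactiveAgent implements Agent {
    private final Map<String, String> reflexes = new HashMap<>();

    ReactiveAgent on(String stimulus, String response) {
        reflexes.put(stimulus, response);
        return this;
    }

    public String act(String percept) {
        return reflexes.get(percept); // null when no reflex applies
    }
}

// Cognitive agent (very simplified): remembers past percepts and uses them.
class CognitiveAgent implements Agent {
    private final List<String> memory = new ArrayList<>();

    public String act(String percept) {
        boolean seenBefore = memory.contains(percept);
        memory.add(percept); // integrate the new experience in memory
        return (seenBefore ? "reuse plan for " : "build plan for ") + percept;
    }
}

// Integrated agent: reacts when a reflex applies, otherwise deliberates.
class IntegratedAgent implements Agent {
    private final ReactiveAgent reactive;
    private final CognitiveAgent cognitive;

    IntegratedAgent(ReactiveAgent reactive, CognitiveAgent cognitive) {
        this.reactive = reactive;
        this.cognitive = cognitive;
    }

    public String act(String percept) {
        String reflex = reactive.act(percept);
        return reflex != null ? reflex : cognitive.act(percept);
    }
}

class AgentDemo {
    static IntegratedAgent build() {
        return new IntegratedAgent(
            new ReactiveAgent().on("obstacle", "stop"),
            new CognitiveAgent());
    }

    public static void main(String[] args) {
        IntegratedAgent agent = build();
        System.out.println(agent.act("obstacle"));
        System.out.println(agent.act("question"));
        System.out.println(agent.act("question"));
    }
}
```

In this sketch the memory plays the role of a (very reduced) kernel: the second time the same percept arrives, the cognitive part behaves differently because the first experience was stored.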

Amine is a Java open source platform suited for the development of different types of intelligent systems/agents. The main goal of Amine is to provide an integrated architecture to build Intelligent Systems and Intelligent Agents. Amine can be of interest not only for Artificial Intelligence and Cognitive Science, but also for the Semantic Web. Amine results from more than 20 years of extensive research and work done by Pr. Adil Kabbaj (1985 to the present) concerning several topics in Conceptual Graphs (CG) Theory and AI. See About for more detail on Amine's background and history.

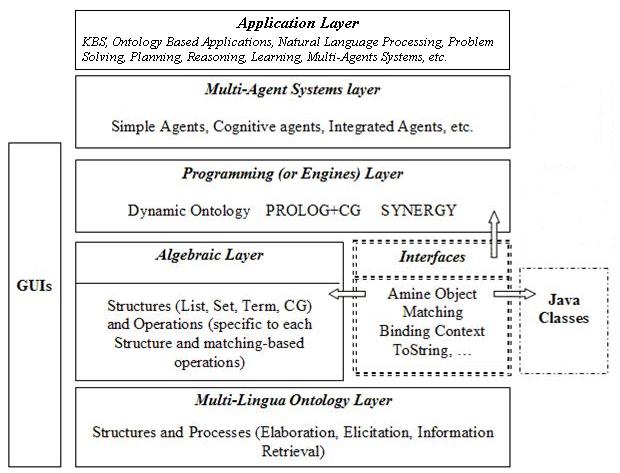

Amine has a multi-layer architecture. In the first versions of Amine (versions 1-4), we adopted a four-layer (or four-level) architecture (Figure 2):

Figure 2: First version of Amine Architecture

1. The Kernel level (Multi-Lingua Ontology Layer): this level makes it possible to create, edit and query an ontology defined in terms of Conceptual Structures (CS): definition, canon, individual and situation. Several conceptual lexicons can be associated with such an ontology (e.g. lexicons for English, French, Spanish, Arabic, etc.), which makes the conceptual ontology multi-lingua.

Since an ontology can be shared by several agents with different languages, we

decided to develop a multi-lingua platform, i.e. a multi-lingua ontology and

multi-lingua structures. Thus, users with different languages can express

knowledge in their languages and still share all knowledge expressed in other

languages. This is done in Amine thanks to the separation between conceptual

elements (concept type, concept referent, relation type) and their identifiers

in a specific language. Multi-lingua support is one key feature of Amine platform. Another key feature is that an Amine ontology is not restricted to a specific description scheme: for the description of Conceptual Structures (the basic components of an Amine ontology), the developer can use CG, KIF, a Frame-like notation, RDF, XML or any description scheme that is implemented in Java (and that, preferably, implements some interfaces of Amine Platform such as AmineObject and Matching). The

kernel level is implemented as a Java package called "kernel" that

contains itself two packages: "lexicons" and "ontology". The

kernel is a “stand-alone” level; the "kernel" package can be used

without the other levels and even without the adoption of any description

scheme (see Ontology and

Samples for more detail on this

possibility). Also, beside the APIs for the creation and use of an ontology

with its associated lexicons, Amine offers a graphic interface, LexiconsOntology

GUI, to browse, create, edit, and update an ontology.

2. Algebraic level: this level provides Amine structures and the operations on them. Structures can be generic (structures with variables), and operations on them take into account the binding context (the programming context that determines how variable binding should be interpreted and resolved, i.e. how to get the value of a variable). In addition to APIs for the creation and manipulation of Amine structures, Amine platform provides a graphic interface, CGNotations GUI, which is an MDI, multi-lingua and multi-notation (LF, CGIF and Graphic) CG editor, and another graphic interface, CGOperations GUI, for the application of CG operations on specific CGs.

3. Programming (or Engines) level/layer: Three complementary engines are provided: a) a dynamic ontology engine that allows an incremental and automatic formation and update of an ontology, b) PROLOG+CG, which is an object-based and CG-based extension of the Prolog language, and c) SYNERGY, which is a language based on CG activation and state propagation.

Prolog+CG and Synergy incorporate two complementary

models of processing: an inference-based

processing (Prolog+CG) and an activation/propagation-based processing

(Synergy). These engines are built on top of (use) the lower levels of Amine

and they can be used separately or in combination. For instance, a Case-Base

can be expressed as an Amine ontology and dynamic ontology engine can be used in

conjunction with Prolog+CG to develop Case-Based Systems. Over the previous two decades, intelligent processes (reasoning, learning, understanding, planning, etc.) have been defined more precisely as Case-Based or Memory-Based processes; this is evident if we recall that intelligent processes are knowledge-based processes and that knowledge is contained in and affected by memory. One important motivation for the development of Amine is to provide a platform for such a memory-based approach to intelligent processes. An Amine ontology can play the role of the intelligent system's memory. Indeed, both terminological (definitional) and situational (cases) knowledge can be integrated in an Amine ontology. Besides these three complementary programming paradigms, Amine provides three basic ontology processes: elaboration, elicitation and information retrieval.

4. Multi-Agent Systems level/layer: Amine provides agent and multi-agent system (MAS) APIs that enable the construction of agents and multi-agent systems. Different types of agents (cognitive, reactive and

integrated agents) and different types of MASs are being considered. Components

of a typical agent in Amine platform can include: an ontology (with lexicons)

that plays the role of the agent’s memory, structures and matching-based

operations to represent and manipulate agent's knowledge structures, the

dynamic ontology engine to perform an automatic and incremental integration in

memory of the continuous stream of information that the agent receives from its

environment, Prolog+CG for the inference-based

processes, and Synergy for the activation and propagation-based processes.
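The separation between conceptual elements and their identifiers in a specific language, described for the kernel level, can be sketched as follows. This is a minimal illustration and not the API of Amine's "kernel" package: the classes `ConceptType`, `Lexicon` and `MiniOntology`, and all their methods, are assumptions made for the example.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical sketch; NOT the API of Amine's "kernel" package.
// A conceptual element carries no name: names live in per-language lexicons.
final class ConceptType {
    private final Set<ConceptType> superTypes = new HashSet<>();

    void addSuperType(ConceptType type) { superTypes.add(type); }

    boolean isSubTypeOf(ConceptType other) {
        if (this == other) return true;
        for (ConceptType s : superTypes) {
            if (s.isSubTypeOf(other)) return true;
        }
        return false;
    }
}

// One lexicon per language: identifier <-> conceptual element.
class Lexicon {
    private final Map<String, ConceptType> byName = new HashMap<>();
    private final Map<ConceptType, String> byType = new HashMap<>();

    void name(ConceptType type, String identifier) {
        byName.put(identifier, type);
        byType.put(type, identifier);
    }

    ConceptType lookup(String identifier) { return byName.get(identifier); }

    String identifierOf(ConceptType type) { return byType.get(type); }
}

class MiniOntology {
    private final Map<String, Lexicon> lexicons = new HashMap<>();

    Lexicon lexicon(String language) {
        return lexicons.computeIfAbsent(language, l -> new Lexicon());
    }

    // Translate an identifier by going through the shared conceptual element.
    String translate(String identifier, String from, String to) {
        ConceptType type = lexicon(from).lookup(identifier);
        return type == null ? null : lexicon(to).identifierOf(type);
    }
}

class OntologyDemo {
    static MiniOntology build() {
        MiniOntology onto = new MiniOntology();
        ConceptType animal = new ConceptType();
        ConceptType cat = new ConceptType();
        cat.addSuperType(animal);
        onto.lexicon("English").name(animal, "Animal");
        onto.lexicon("English").name(cat, "Cat");
        onto.lexicon("French").name(animal, "Animal");
        onto.lexicon("French").name(cat, "Chat");
        return onto;
    }

    public static void main(String[] args) {
        System.out.println(build().translate("Cat", "English", "French"));
    }
}
```

Since the type hierarchy is attached to the language-neutral `ConceptType` objects, a question asked with French identifiers is answered by the same structure as one asked with English identifiers, which is the point of keeping identifiers out of the conceptual elements.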

The above four levels (layers) form a hierarchy: each level is built on top of and uses the lower levels. However, it is important to note that a lower level can be used by itself without the higher levels: the kernel level/package can be used directly in any application, the algebraic level/packages can be used directly too, etc. One of the goals (and constraints) that influenced the design and implementation of Amine was to achieve a high level of modularity and independence between components.

This four-layer architecture of Amine was redefined, in Amine 4, as a five-layer architecture, where Applications constitute the fifth layer (Figure 3).

Figure 3: Applications as a fifth layer in Amine Platform

In Amine 5, the architecture is refined and extended to a seven-layer architecture (Figure 4). Two new layers were added: the Knowledge Base Layer and the Memory-Based Inference and Learning Strategies Layer.

See Knowledge Base Layer

and Memory-Based Inference and Learning Strategies

Layer for more detail about these two new layers.

Figure 4: Seven-Layers Architecture of Amine Platform

This seven-layer architecture makes the intelligent system/agent architecture clearer and more precise: it is better to make both the Ontology layer and the Knowledge Base layer explicit, to separate the two layers, and to make explicit the strong relation between them. In the previous versions of Amine, the two layers were merged into one layer; an ontology in Amine could represent an ontology as well as a Knowledge Base.

The Knowledge Base layer is built upon the Ontology layer (Figure 4), and the two layers constitute the Memory of the intelligent system/agent. Knowledge structures and operations are built upon the Ontology/KB layers and constitute the algebraic layer. The Memory-Based Inferences and Learning Layer provides (knowledge) inference and learning strategies that are built upon and use the first three layers. Amine's Memory-Based Inferences and Learning Layer provides some inference and learning strategies but it is far from being complete. The Process/Programming/Engines layer is built upon the lower layers and provides two programming languages (Prolog+CG and Synergy) to define/develop different intelligent processes (problem solving, natural language processing, planning, etc.). The Agent/Multi-Agent Systems layer is built upon the lower layers and incorporates the communication capability between agents.

Amine platform can be viewed as a basis for an Intelligent Virtual Machine; layers/levels of

Amine cover most of the complementary components needed in the development of

intelligent systems/agents with various capabilities. There is a strong

cohesion and complementarity between Amine's layers.

However, it is not mandatory to use all seven layers of Amine in each application or in the development of every intelligent system/agent: as noted already, a layer requires the lower layers, but it doesn't require the upper layers. Thus, a user may use all packages of Amine or only those that are required for his/her application.
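The rule that a layer requires the layers below it but none of the layers above it can be written down as a small Java sketch. The enum below is hypothetical: it lists the seven layers in the bottom-up order suggested by the text (with Applications assumed to be the topmost layer) and does not correspond to any class of Amine.

```java
import java.util.EnumSet;
import java.util.Set;

// Hypothetical sketch: the seven layers in assumed bottom-up order.
enum Layer {
    ONTOLOGY,
    KNOWLEDGE_BASE,
    ALGEBRAIC,
    MEMORY_BASED_INFERENCE_AND_LEARNING,
    ENGINES,
    MULTI_AGENT_SYSTEMS,
    APPLICATIONS;

    // A layer requires every layer below it and none above it.
    Set<Layer> required() {
        Set<Layer> lower = EnumSet.noneOf(Layer.class);
        for (Layer l : values()) {
            if (l.ordinal() < ordinal()) lower.add(l);
        }
        return lower;
    }

    // Checks that a chosen subset of layers can be used on its own.
    static boolean isSelfContained(Set<Layer> chosen) {
        for (Layer l : chosen) {
            if (!chosen.containsAll(l.required())) return false;
        }
        return true;
    }
}

class LayerDemo {
    public static void main(String[] args) {
        System.out.println(Layer.ALGEBRAIC.required());
        System.out.println(Layer.isSelfContained(EnumSet.of(Layer.ONTOLOGY)));
        System.out.println(Layer.isSelfContained(EnumSet.of(Layer.ENGINES)));
    }
}
```

Under this reading, an application that only needs an ontology can depend on the lowest layer alone, while selecting the engines layer without the layers beneath it is rejected.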

Agent architecture and Amine architecture

There is a strong affinity between our proposed Intelligent Agent architecture (Figure 1) and the latest version of Amine architecture (Figure 4): the kernel of an Intelligent Agent corresponds to the first two layers of Amine (Ontology and KB layers), and to the fourth layer. Note that the third layer of Amine (the algebraic layer) refers to a fundamental component that is not explicit, or is even absent (or hidden), in many intelligent agent architectures: Knowledge/Conceptual Structures and Operations. In conclusion, the first four layers of Amine allow the agent's developer to design and implement the kernel of an intelligent agent.

Instead of providing specific implementations of cognitive processes (problem solving, natural language processing, planning, etc.), Amine provides two programming languages (PROLOG+CG and SYNERGY) that constitute its Programming/Engine/Process layer. With these languages, and the other components of Amine, the user (the developer of intelligent agents) can define and implement the agent's cognitive processes.

Amine APIs Specification

Amine APIs Specification can be found here.

Genericity in Amine

One important feature of Amine is the effort to

maximize the genericity

of the platform:

a. the kernel (multi-lingua ontology) is generic in the sense that an Amine ontology is an integration of various kinds of ontologies (see Ontology for more detail on this point) and an ontology is independent of any specific description scheme;

b. the algebraic level is generic in the sense that it supports generic structures (structures with variables) and a generic binding context. The algebraic level is also generic in the sense that it is "open" to Java; it provides interfaces (AmineObject and Matching) that allow the integration of new structures into Amine;

c. the dynamic ontology engine is generic in the sense that it considers various types of information. Prolog+CG is generic in its integration of Amine's lower levels, in its object extension of Prolog and its "openness" to Java, etc.

See Ontology, Structures and CG Operations for further details on Amine's

genericity concerning its first and second levels.

Amine's genericity will be

enhanced in the future.
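To illustrate what "generic structures (structures with variables)" and a "binding context" mean in practice, here is a minimal Java sketch of matching a structure that contains variables against a ground structure. It is an assumption-laden illustration: `GenericStructure` and `BindingContext` are hypothetical classes, variables are simply strings starting with `?`, and nothing here reproduces Amine's AmineObject or Matching interfaces.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch; it does not reproduce Amine's AmineObject or Matching.
class BindingContext {
    private final Map<String, String> bindings = new HashMap<>();

    // Bind a variable, or check consistency with an earlier binding.
    boolean bind(String variable, String value) {
        String previous = bindings.putIfAbsent(variable, value);
        return previous == null || previous.equals(value);
    }

    // Resolve a term: a bound variable yields its value, anything else itself.
    String valueOf(String term) {
        return bindings.getOrDefault(term, term);
    }
}

class GenericStructure {
    private final List<String> terms;

    GenericStructure(String... terms) {
        this.terms = Arrays.asList(terms);
    }

    // Assumed convention for this sketch: variables start with '?'.
    static boolean isVariable(String term) {
        return term.startsWith("?");
    }

    // Match this (possibly generic) structure against a ground structure.
    Optional<BindingContext> match(GenericStructure ground) {
        if (terms.size() != ground.terms.size()) return Optional.empty();
        BindingContext context = new BindingContext();
        for (int i = 0; i < terms.size(); i++) {
            String t = terms.get(i);
            String g = ground.terms.get(i);
            boolean ok = isVariable(t) ? context.bind(t, g) : t.equals(g);
            if (!ok) return Optional.empty();
        }
        return Optional.of(context);
    }
}

class MatchingDemo {
    public static void main(String[] args) {
        GenericStructure pattern = new GenericStructure("parent", "?x", "?y");
        GenericStructure fact = new GenericStructure("parent", "Tom", "Ann");
        pattern.match(fact).ifPresent(c -> System.out.println(c.valueOf("?x")));
    }
}
```

The binding context is what gives a variable its value: the same variable name means nothing outside the context produced by a particular match, and a repeated variable must be bound consistently within it.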

The "proximity" of the conceptual/specification level and the implementation level

Great efforts have been made to significantly reduce

the "gap" between the conceptual/specification level and the

implementation level of Amine platform. This is reflected in the architecture

of the source code, in the packages and classes, in the naming and especially

in the APIs that provide several methods that "bring" the conceptual

level to the programming level. Care has also been taken to produce simple and readable code that stays very close to the conceptual and specification levels of the platform. In this way, the code can be easily enhanced, debugged,

updated, modified and extended. A quick look at Amine's APIs and related tests

will give the reader an idea about what we mean by this important feature.

From Amine Platform 1.0 to Amine Platform 2.0

Amine Platform 2.0 is a complete revision and

refinement of the first version, both at the conceptual and implementation

levels. At the conceptual level, concepts such as language, lexicon, conceptual

structure, ontology, descriptions, and binding context are made clearer in 2.0

than in 1.0. The genericity of Amine is new in this version. Also, structures and operations of Amine have been redefined and extended. GUIs have also been modified and refined. In addition to the three GUIs presented in the previous version (LexiconsOntology, CGNotations and CGOperations GUIs), this new version presents the Amine Platform Suite GUI (Figure 5), a frame that shows all the components of the platform and provides direct access to its various GUIs. It also gives access, via the Help menu, to the APIs specification, the documentation and the URL of the new Amine Web Site.

Compared with the previous version of the Amine Web Site, the current web site is much more informative and better structured; more documentation (in addition to the APIs specification) is available on the new web site.

Figure 5: Amine 2.0 Platform Suite

Note: Engines and MAS layers will be released in the next versions.

At the implementation level, the code has been completely rewritten to satisfy several goals: clarity, readability, organization, and proximity to the conceptual level (the code closely reflects the conceptual and specification levels). The result is better naming, definition, organization, and documentation of methods, classes and packages. The goal of this effort is to offer open source code that can be easily used, modified and extended.

The release of Amine Platform 2.0 contains the source code, the compiled code, tests done on the APIs, samples (the current version contains only a subdirectory for ontology samples), documentation (i.e., the APIs specification, which has been enhanced), the deployment of Amine on various platforms, and the new web site.

Inside Amine Platform 2.0: a quick overview of Amine packages

We provide a quick overview of Amine Platform 2.0

packages. More details can be found in Implementation

issues and in the pages associated with the different

components of Amine. Recall that engines and MAS layers are not included in the

current version.

The organization of Amine's source code reflects its

specification; Amine's source code contains packages that correspond to Amine

levels: kernel package for the kernel level,

util package for

the algebraic level, guis

package for Amine GUIs, and test and samples packages. In the next versions, we will

provide the engines and the Multi-Agents Systems packages.

The kernel package is composed of two packages, lexicons and ontology: the former for the Lexicon API and the latter for the Ontology API. The ontology package includes classes that define the four types of Conceptual Structures (CS) used in Amine Platform: Type, RelationType, Individual and Situation. The Canon CS is integrated in the Type CS (a type can have a definition and/or a canon). The Ontology API is partitioned over all the classes of the ontology package.

As stressed above, the kernel package (lexicons and

ontology packages) is independent from the other packages and it can be used

alone.

Amine's source code also contains a package called util (like the package

with the same name in Java) where the basic structures of Amine Platform are

defined. An object in Amine can be an elementary

Java object (Integer, Double, Boolean, and String), an elementary Amine object (AmineInteger,

AmineDouble, AmineBoolean,

and Identifier, a CS), an Amine collection object

(AmineSet and AmineList),

and an Amine complex object (Term, Concept, Relation, and CG). Also, any Java

Object that implements AmineObject

and Matching

interfaces becomes an Amine object and can be used as a component of an Amine

structure.

The common operations (clear(),

clone(), toString()) are specified in AmineObject interface.

Another set of common operations are matching-based

operations (match(), equal(), unify(),

subsume(), maximalJoin(), and generalize()). These

operations are specified in the Matching

interface. The two interfaces are implemented by all Amine structures.
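To make the idea of "any Java object that implements both interfaces becomes an Amine object" more concrete, here is a minimal sketch. It does not use the real Amine API: SketchAmineObject and SketchMatching are hypothetical, simplified stand-ins for AmineObject and Matching, their method signatures are assumptions, and only a subset of the matching-based operations (equal, subsume, generalize) is shown. IntRange is an invented structure used only for illustration. The actual signatures should be taken from the Amine APIs specification.

```java
// Hypothetical stand-in for AmineObject: the common operations named in the text.
// The signatures are assumptions, not the real Amine API.
interface SketchAmineObject {
    void clear();
    Object clone();
    String toString();
}

// Hypothetical stand-in for Matching, reduced to three of the listed operations.
interface SketchMatching {
    boolean equal(Object other);      // same content
    boolean subsume(Object other);    // this is at least as general as other
    Object generalize(Object other);  // a structure that covers both
}

// An invented structure: an integer interval [low..high].
// Implementing both interfaces is what would make it usable as a component.
final class IntRange implements SketchAmineObject, SketchMatching {
    private int low;
    private int high;

    IntRange(int low, int high) {
        if (low > high) {
            throw new IllegalArgumentException("low must not exceed high");
        }
        this.low = low;
        this.high = high;
    }

    @Override public void clear() { low = 0; high = 0; }

    @Override public IntRange clone() { return new IntRange(low, high); }

    @Override public String toString() { return "[" + low + ".." + high + "]"; }

    @Override public boolean equal(Object other) {
        if (!(other instanceof IntRange)) return false;
        IntRange r = (IntRange) other;
        return low == r.low && high == r.high;
    }

    // An interval subsumes another one when it contains it entirely.
    @Override public boolean subsume(Object other) {
        if (!(other instanceof IntRange)) return false;
        IntRange r = (IntRange) other;
        return low <= r.low && r.high <= high;
    }

    // The generalization of two intervals is the smallest interval covering both.
    @Override public Object generalize(Object other) {
        if (!(other instanceof IntRange)) return null;
        IntRange r = (IntRange) other;
        return new IntRange(Math.min(low, r.low), Math.max(high, r.high));
    }
}

class MatchingSketchDemo {
    public static void main(String[] args) {
        IntRange a = new IntRange(1, 5);
        IntRange b = new IntRange(3, 9);
        System.out.println(a.subsume(new IntRange(2, 4))); // true
        System.out.println(a.generalize(b));               // [1..9]
    }
}
```

The point of the sketch is the design choice described above: generic operations are expressed against the two interfaces rather than against concrete classes, so a new structure only has to supply its own versions of the common and matching-based operations.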

util package includes a package, called cg, for the Concept, Relation and CG structures

and their associated operations. util includes also a parserGenerator package

which concerns parsing and textual formulation of all Amine structures.

Amine's source code also contains a guis package which

includes packages for the current GUIs of Amine Platform (lexiconsOntology

GUI, cgNotations GUI, cgOperations

GUI, and aminePlatform GUI). The util package in guis

contains classes that are used by the various GUIs.

Further details on the implementation are provided in implementation issues and in the pages associated with the different components of Amine

Platform.

From Amine 2.0 to Amine 3.0

See release

of Amine 3 for the difference between Amine 3 and Amine 2.

From Amine 3.0 to Amine 4.0

Amine 4.0 includes Ontology Graphic Editor, Dynamic

Programming in Synergy, MAS layer and several other enhancements in comparison

with Amine 3.0. See release

of Amine 4 for more detail.

From Amine 4.0 to Amine 5.0

Amine 5.0 introduces two new layers: Knowledge Base Layer and Memory-Based

Inference and Learning Strategies layer. It also introduces Rule as a new Conceptual Structure, besides Definition, Canon,

Individual and Situation.

Ontology and KB can contain Rules.

Dynamic Integration Process has been refined into different "subprocesses", taking into account the variety of Conceptual Structures, ontologies and KBs that can be constructed: definition integration process, situation integration process, rule integration process, and heterogeneous integration process.

Also, Amine 5.0 makes clear the three modes of

integration process: classification-based

integration process, generalization-based integration process, and information

retrieval integration process.

See release

of Amine 5.0.

From Amine 5.0 to Amine 6.0

Amine 6.0 brings a lot of changes and extensions. See Amine 6.0 release for more detail. Figure 6 shows the updated form of Amine Suite.

Figure 6: Amine 6.0 Platform Suite

From Amine 6.0 to Amine 10

See release archives.

Intelligent Systems using Amine Platform

Various types of intelligent systems can be developed using Amine Platform. The first three layers/levels provide tools to develop Knowledge-Based and Ontology-based systems. With Prolog+CG, intelligent and intentional systems can also be developed (such as inference-based systems, natural language processing systems, and planning systems). With the inference and learning strategies layer, Amine Platform makes it possible to build intelligent systems with memory-based inference and learning capabilities: in this context, the dynamic ontology and KB correspond to memory, and the dynamic ontology/KB engines are learning processes that can be extended to integrate other learning strategies such as analogy, abduction and explanation-based learning. Case-Based Systems can be developed using, for instance, Prolog+CG and the other lower layers. Activation-based systems can be developed using the Synergy language, an activation/propagation-based language suited for data/event-driven and result/goal-driven computations. Last, the Multi-Agent Systems layer/level, which can exploit the lower layers/levels of Amine, is intended for the development of different types of agents and of multi-agent systems.

Being implemented in Java, Amine can be viewed as an

extension of the Java Virtual Machine (JVM)

to a Java Intelligent Virtual Machine, in

order to easily implement various types of intelligent

systems/agents.

A (sequential) map for a visit of Amine Platform

1. Introduction

PART 1: ONTOLOGY and KNOWLEDGE BASE LAYERS

4. Tests for Ontology and Lexicons

5. Samples for Ontology and Lexicons

6. Ontology GUI

PART 2: ALGEBRAIC LAYER: CONCEPTUAL STRUCTURES & OPERATIONS

8. Structures

9. Elementary objects and Collections

10. Complex Structures: Term, Concept, Relation, and CG

11. MDI, Multi-lingual and Multi-Notation Editors for CG (GUI)

12. CG Matching-Based Operations

PART 3: MEMORY-BASED INFERENCE AND LEARNING STRATEGIES LAYER

15. Ontology Processes: Elaboration, Elicitation and Information Retrieval

16. Memory-Based Inference and Learning Strategies Layer

PART 4: PROCESS/PROGRAMMING/ENGINE LAYER

18. SYNERGY language

PART 5: AGENT AND MULTI-AGENT SYSTEMS LAYER

PART 6: APPLICATIONS LAYER

20. Applications